
However, in the near future, generative AI will also be able to generate more technical data, such as the data produced experimentally in university research laboratories. This will have unprecedented consequences for the production of scientific knowledge, and they should be anticipated, especially because AI can hallucinate*.

The complexity of molecular biology is such that, within the mass of corresponding data, tiny hallucinations could go unnoticed and lead to erroneous conclusions (for example, a non-existent biomarker) with devastating consequences, such as corrupting the scientific literature or funding pointless clinical trials. However, banning generative AI from scientific research would deprive the scientific and medical communities of powerful tools.

To address this dilemma, researchers at CEA-Irig have proposed cataloging various use cases where AI can be used reliably thanks to an adequate risk mitigation policy. Their work presents about ten use cases classified into three categories:

1 - Generating new hypotheses,

2 - Generating new data,

3 - Improving computational biology software.

Example use case

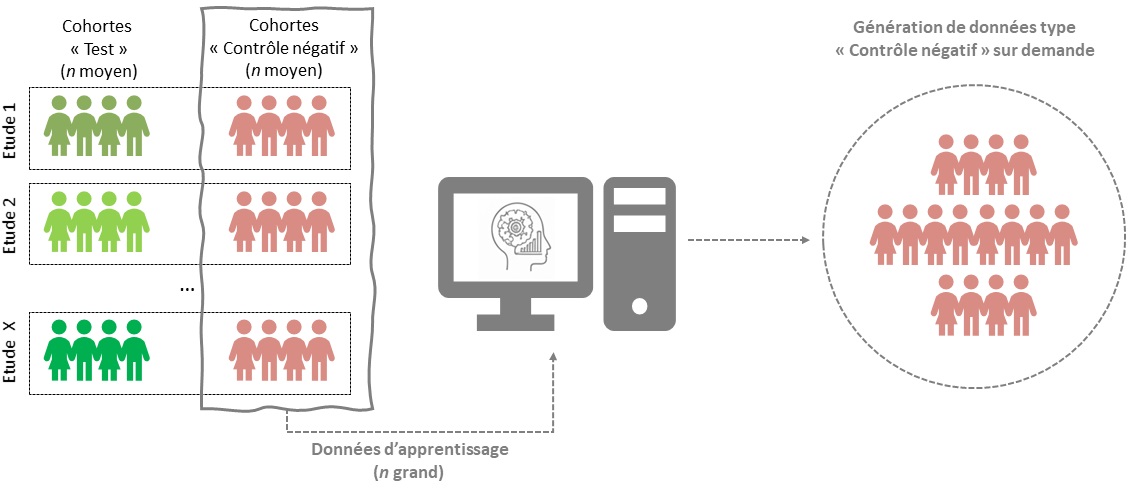

Completing a cohort by generating additional data for patients in the sick group (the "test" group, shown in green) would be very risky, as any undetected hallucination would lead to a biased representation of the disease.

Conversely, completing the group of healthy patients (in red), which serves as the control in the study, can comply with a risk mitigation policy, for two reasons. First, undetected hallucinations would here lead to greater diversity within the control group, which is known to be an effective way to limit the risk of false discoveries. Second, healthy patients have been admitted more frequently into cohort studies, so the data potentially available for training the AI are more extensive, more robust, and more consistent.

This example illustrates how a given generative AI algorithm, adapted to a given task, can be used in different ways, with different exposures to the risks induced by hallucinations.
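The control-group argument can be made concrete with a toy calculation. The sketch below is only an illustration under stated assumptions: the measurement values are invented, the helper `welch_t` is a hypothetical name, and Welch's t-statistic stands in for whatever test a real study would use. It is not the method used by the CEA-Irig researchers. It shows that when synthetic control samples add spread around the same average, the test-versus-control statistic becomes smaller, so the comparison becomes more conservative.

```python
import math
import statistics


def welch_t(test, control):
    """Welch's t-statistic for the difference in means (test minus control)."""
    standard_error = math.sqrt(
        statistics.variance(test) / len(test)
        + statistics.variance(control) / len(control)
    )
    return (statistics.mean(test) - statistics.mean(control)) / standard_error


# Invented biomarker levels, for illustration only (not real patient data).
control_real = [1.0, 1.2, 0.8, 1.1, 0.9]   # healthy patients actually measured
test_real = [1.3, 1.5, 1.1, 1.4, 1.2]      # sick patients actually measured

# Hypothetical AI-generated control samples: same average, wider spread,
# mimicking undetected hallucinations that add diversity to the control group.
control_synthetic = [1.6, 0.4, 1.3, 0.7]

t_real_only = welch_t(test_real, control_real)
t_augmented = welch_t(test_real, control_real + control_synthetic)

print(f"t with real controls only:      {t_real_only:.2f}")
print(f"t with augmented control group: {t_augmented:.2f}")
```

In this toy setting the statistic drops once the more diverse synthetic controls are included, so an apparent difference is harder to declare significant. The effect depends on the numbers chosen: adding samples also increases the control group size, which by itself tightens the estimate, so the added diversity has to outweigh that for the comparison to become more conservative.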

Although not exhaustive, these uses constitute an initial basis for the proper integration of generative AI into the scientific process, as they encourage researchers to adopt a critical view of its use.

Notes:

*Generative Artificial Intelligence refers to algorithms that can not only analyze data and make decisions or predictions, like classic artificial intelligence (AI) tools, but also generate new data.

*Hallucinations: occur when a generative AI responds to a query (also called a "prompt") by generating details that seem plausible in some respects, but which are either incorrect (for example, a reference to a non-existent article) or impossible according to certain real-world constraints that are ignored by the generative AI (for example, US President Abraham Lincoln commenting on the internet, as in the illustration at the top of the article).